Executive Summary

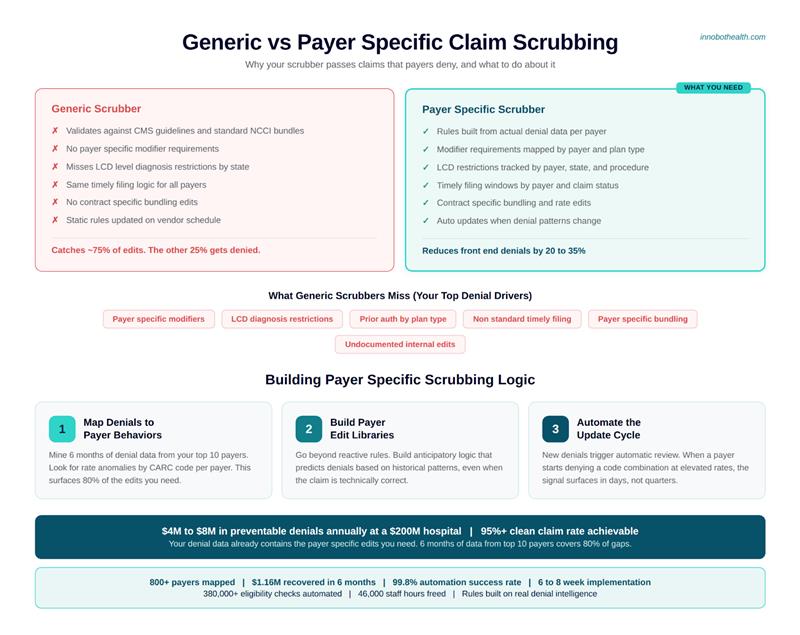

Generic claim scrubbers rely on standard industry rules, but fail to capture payer-specific requirements, leading to preventable denials and revenue loss. Mid-market hospitals can lose millions annually due to missed modifiers, diagnosis restrictions, and timely filing errors that generic systems don’t detect. These gaps result in increased denial rates, longer AR cycles, and unnecessary manual rework.

Payer-specific claim scrubbing addresses this by using real denial data to build dynamic rules tailored to each payer. With automated updates and targeted edit libraries, organizations can reduce front-end denials by 20–35% and improve clean claim rates significantly. Implementing a payer-focused rules engine within 6–8 weeks enables faster reimbursements, reduced operational costs, and stronger overall revenue cycle performance.

TL;DR — Key Takeaways

- Generic claim scrubbers validate against industry-standard rules, not the 847 custom edits your top five payers actually use.

- Payer-specific claim scrubbing can reduce front-end denials by 20–35%, without adding headcount.

- The average mid-market hospital loses $4–8M annually to preventable claim edits and timely filing write-offs that a payer rules engine would catch.

- Mapping payer-specific rules isn’t a six-month IT project. The right approach takes 6–8 weeks.

- If your scrubber passes a claim that Blue Cross denies on the back end, your scrubber doesn’t know what Blue Cross actually wants.

The Claim That Should Never Have Been Denied

Here’s a scenario every revenue cycle director has lived: your team submits a clean claim with correct diagnosis codes, correct procedure codes, and correct UB-04 formatting. Your scrubber signs off. The claim goes out the door.

Forty-five days later, you get a denial. Reason: payer edit failure. The payer wanted modifier 59 appended to a specific CPT combination. They’ve been requiring it since last July. You didn’t know because your scrubber doesn’t know what that payer actually requires.

This is the core failure of generic claim scrubbers, and it’s why payer-specific claim scrubbing isn’t a nice-to-have for health systems that rely on automated insurance verification and claim validation. It’s the difference between getting paid in 30 days and chasing denials for 90.

Ready to stop leaving money on the table? Innobot’s automated medical billing software is purpose-built for the payer-specific rules your current system ignores.

Why Generic Claim Scrubbers Are Built for a World That Doesn’t Exist

Generic scrubbers are engineered against CMS guidelines, AMA code edits, and standard NCCI bundles. That’s a reasonable baseline, but payers don’t pay based on a reasonable baseline. They pay based on their own contracts, their own medical coverage policies, their own internal edit libraries, and sometimes, frankly, their own interpretation of what the rules mean this quarter.

Here’s what generic claim scrubbers don’t capture:

- Payer-specific modifier requirements (e.g., Aetna requiring modifier 25 on certain E/M codes even when technically unnecessary under standard guidelines)

- Diagnosis restrictions in coverage policies (Medicare LCDs and payers’ own medical policies) that vary by payer and by state

- Prior authorization flags that differ by plan type within the same payer: fully-insured and self-funded plans under the same Blue Cross umbrella often have different rules

- Timely filing windows that range from 90 days (some Medicaid and commercial plans) to 180 days (many commercial payers) to 12 months from the date of service for Medicare, and those windows reset differently for corrected claims

- Bundling edits that are payer-specific, not just NCCI-driven

The math is ugly. A mid-market hospital billing $200M annually with a 3.5% front-end denial rate is looking at roughly $7M in denied claims per year (3.5% of $200M), which sits inside the $4M to $8M range cited above. The majority of those claim edit failures are preventable if the scrubber actually knows the payer’s rules. That’s not a billing problem. That’s a data problem. Your scrubber is working off an incomplete ruleset.
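To make the timely filing point concrete, here is a minimal Python sketch of a filing-window lookup keyed by payer and plan type rather than by payer alone. The payer names, plan types, day counts, and the fallback value are all hypothetical placeholders; real limits come from each payer contract.

```python
from datetime import date, timedelta

# Hypothetical filing windows in days, keyed by (payer, plan type).
# Illustrative values only; real limits come from each payer contract.
FILING_WINDOWS = {
    ("Example Health", "fully-insured"): 180,
    ("Example Health", "self-funded"): 90,
    ("Example Medicaid MCO", "managed-care"): 90,
}
FALLBACK_DAYS = 90  # assumed conservative default when no plan-level rule exists


def filing_deadline(payer: str, plan_type: str, service_date: date) -> date:
    """Return the last filing date for a claim under the plan-level window."""
    days = FILING_WINDOWS.get((payer, plan_type), FALLBACK_DAYS)
    return service_date + timedelta(days=days)


def days_remaining(payer: str, plan_type: str, service_date: date, today: date) -> int:
    """Days left before the filing window closes (negative means it was missed)."""
    return (filing_deadline(payer, plan_type, service_date) - today).days


if __name__ == "__main__":
    dos = date(2024, 1, 1)
    for plan in ("fully-insured", "self-funded"):
        print(plan, filing_deadline("Example Health", plan, dos))
```

Keying on plan type is the point of the sketch: the same date of service produces two different deadlines under one payer umbrella, which a payer-level lookup would miss.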

What Payer Rules Actually Look Like in Practice

I want to give you a concrete example, because abstract discussions of “payer edit libraries” don’t do justice to how granular this gets.

Take knee replacement procedures, specifically CPT 27447 (total knee arthroplasty). For years, several Blue Cross plans were applying a blanket denial to 27447 claims with ICD-10 diagnosis codes in the M17.1x range (primary osteoarthritis of the knee) unless the claim also included:

- Documentation of failed conservative treatment (often expected as a remark code or attachment)

- A prior auth number tied to a specific plan-level policy, not just any auth number

- A revenue code on the UB-04 that matched their internal facility fee schedule, not the standard 0360 most systems default to

A standard scrubber passes this claim. Blue Cross denies it. Your biller opens a denial that’s technically compliant by every standard rule, but wrong by that payer’s proprietary criteria. Your team spends 40 minutes on an appeal that should never have been necessary.

Multiply that by the thousands of payer-specific edits across your top 20 payers, and you start to understand why claim validation teams in healthcare are drowning in denials that all look “clean” on paper.
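As a rough illustration, the 27447 example could be expressed as a single payer-specific edit along these lines. This is a sketch, not any payer’s actual logic: the `Claim` fields, the attachment label, the policy ID, and the expected revenue code are all hypothetical names chosen for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Claim:
    payer: str
    cpt: str
    dx_codes: list[str]
    revenue_code: str
    auth_policy_id: str = ""  # hypothetical: the policy the auth was issued under
    attachments: list[str] = field(default_factory=list)


def check_tka_edit(claim: Claim, required_policy_id: str, expected_revenue_code: str) -> list[str]:
    """Apply a hypothetical payer edit for CPT 27447 billed with an M17.1x diagnosis."""
    applies = claim.cpt == "27447" and any(dx.startswith("M17.1") for dx in claim.dx_codes)
    if not applies:
        return []
    failures = []
    if "conservative_treatment_documentation" not in claim.attachments:
        failures.append("missing documentation of failed conservative treatment")
    if claim.auth_policy_id != required_policy_id:
        failures.append("prior auth not tied to the required plan-level policy")
    if claim.revenue_code != expected_revenue_code:
        failures.append("revenue code does not match payer facility fee schedule")
    return failures


if __name__ == "__main__":
    claim = Claim("Example Blue Plan", "27447", ["M17.11"], "0360")
    # "POL-123" and "0361" are placeholders, not real payer requirements.
    for problem in check_tka_edit(claim, "POL-123", "0361"):
        print(problem)
```

A claim that passes every standard edit still returns three failures here, which is exactly the gap between “technically compliant” and “payable by this payer.”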

What Most Teams Try (And Why It Doesn’t Scale)

The typical response to payer-specific denial patterns is tribal knowledge. Your best biller figures out that Cigna wants modifier 51 exempt status called out differently. She tells the new coder. The new coder tells the next hire. Six months later, half the team knows the Cigna rule and half doesn’t. Denials fluctuate. You can’t tell if you’re improving or just cycling through the same edits.

The second approach is manual payer policy tracking, usually a shared spreadsheet with payer bulletins, LCD updates, and contract-specific rules that someone updates whenever they get around to it. Which is never quite current enough. Payers update their edit libraries quarterly, sometimes monthly. CMS issues new transmittals. Your spreadsheet is always three news cycles behind.

The third approach is buying a better commercial scrubber. This helps: premium scrubbers do have broader edit libraries than basic ones. But even the best commercial scrubbers are built on industry-standard logic, not the specific contractual and operational preferences of your top payers. They’ll catch 75% of what needs catching. The remaining 25% still gets through and gets denied on the back end.

None of these approaches is wrong. They’re just not enough.

Wondering if your current setup qualifies as a real claim scrubber in healthcare, or just a compliance checkbox? Explore what a true claim scrubber actually does and see how your current scrubbing capability compares against payer-specific standards.

A Better Framework: Payer-Specific Claim Scrubbing That Actually Reflects Reality

The insight that changes how RCM leaders think about this problem is simple: your scrubber should know what each payer actually denies, not what the industry says they should deny.

That means building and maintaining a payer rules engine that’s grounded in denial data, not just edit logic. Here’s what that looks like in practice:

1. Map Denials Back to Root Causes at the Payer Level

Most denial tracking systems bucket denials by CARC/RARC code and stop there. That tells you why a claim was denied in the abstract. It doesn’t tell you that Humana is currently denying physical therapy (PT) claims with a specific CPT-diagnosis combination at a 34% rate, which is what you actually need to build a useful edit.

The first step in building payer-specific scrubbing logic is mining your own denial history and attributing root causes to specific payer behaviors. Six months of denial data from your top 10 payers will surface 80% of the payer-specific edits you need to build.
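A minimal sketch of that mining step, assuming denial history is available as simple records. The dictionary keys (`payer`, `cpt`, `dx`, `denied`) and the `min_volume` cutoff are assumptions for illustration, not a reference to any particular export format.

```python
from collections import Counter


def denial_rates_by_pattern(claims, min_volume=20):
    """Rank (payer, CPT, diagnosis) combinations by denial rate.

    `claims` is an iterable of dicts with hypothetical keys:
    payer, cpt, dx, denied (bool).
    """
    totals, denied = Counter(), Counter()
    for claim in claims:
        key = (claim["payer"], claim["cpt"], claim["dx"])
        totals[key] += 1
        denied[key] += claim["denied"]  # True counts as 1, False as 0
    rates = {
        key: denied[key] / n
        for key, n in totals.items()
        if n >= min_volume  # skip low-volume combinations to avoid noisy rates
    }
    return sorted(rates.items(), key=lambda item: item[1], reverse=True)
```

Grouping by the full payer-CPT-diagnosis combination, rather than by CARC code alone, is what turns a generic denial report into a list of candidate payer-specific edits.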

2. Build a Payer Rules Engine (Not Just an Edit Library)

An edit library is reactive: it checks whether a claim meets known requirements. A payer rules engine is anticipatory: it predicts whether this specific payer is likely to deny this claim based on historical pattern, even if the claim is technically correct.

This distinction matters because payers often deny claims for reasons that aren’t codified anywhere. They’re applying internal logic that isn’t published. Your rules engine needs to reflect your actual denial experience with that payer, not just their publicly stated policies.
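One simple way to picture the anticipatory side is a pre-submission check that consults observed denial rates even when the claim has passed every static edit. This is a sketch under stated assumptions: the function name, the `history` mapping, and the 0.25 hold threshold are hypothetical.

```python
def presubmission_decision(claim, history, hold_threshold=0.25):
    """Decide whether to hold a claim that already passed static edits.

    `history` maps (payer, cpt, dx) to an observed denial rate. The 0.25
    threshold is an assumption chosen for illustration.
    """
    rate = history.get((claim["payer"], claim["cpt"], claim["dx"]))
    if rate is None:
        return "submit"  # no history for this pattern, nothing to predict from
    return "hold_for_review" if rate >= hold_threshold else "submit"
```

The design choice worth noting is that the decision depends on your own experience with the payer, not on whether the rule appears in any published policy.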

3. Automate the Update Cycle

Payer policies change. LCD policies update. Contracts are renegotiated. If your payer-specific edit library is static, maintained by a human updating a spreadsheet, it will always be stale.

Automated claims compliance means building a workflow where new denials automatically trigger a review of whether a new edit is needed. When a payer starts denying a specific code combination at an elevated rate, that signal should surface within days, not quarters.
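A sketch of what that trigger might look like: compare a short recent window against the historical baseline and surface any pattern whose denial rate has jumped. The parameter names and defaults (`min_claims`, `lift`, `floor`) are assumptions for illustration and would need tuning against real volumes.

```python
def new_edit_candidates(baseline, recent, min_claims=10, lift=2.0, floor=0.10):
    """Flag patterns whose recent denial rate is elevated versus baseline.

    baseline: {pattern: historical denial rate}
    recent:   {pattern: (denied_count, total_count)} for a short recent window
    """
    candidates = []
    for pattern, (denied, total) in recent.items():
        if total < min_claims:
            continue  # too few claims in the window to trust the rate
        recent_rate = denied / total
        # Require both a multiple of baseline and an absolute minimum rate.
        threshold = max(baseline.get(pattern, 0.0) * lift, floor)
        if recent_rate >= threshold:
            candidates.append((pattern, recent_rate))
    return sorted(candidates, key=lambda c: c[1], reverse=True)
```

Run on a daily or weekly schedule, a check like this gives a reviewer a short list of patterns to investigate instead of waiting for a quarterly denial report.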

How to Evaluate Whether Your Current System Is Doing This

If you want to assess whether your current scrubbing solution is doing real payer-specific claim scrubbing, or just generic claim validation, ask your vendor these questions:

- How many payer-specific edit libraries do you maintain, and how often are they updated?

- Can you show me the denial rate differential between claims that pass your scrubber and claims submitted without scrubbing, by payer?

- How does your system handle payer policy updates that aren’t reflected in standard NCCI edits?

- Do you track payer-specific timely filing windows by plan type, not just by payer umbrella?

- How are contract-specific rules (from negotiated rates and terms) incorporated into the edit logic?

If the answers are vague, that’s your answer. You’re using a generic scrubber dressed up in better marketing language.

What to look for instead: A solution built on a continuously maintained payer intelligence database, not just an edit engine, that tracks real denial patterns, maps them to specific payer behaviors, and updates edit logic based on what’s actually happening in your AR. Innobot maintains rules across 800+ payers, built on a foundation of real-world denial intelligence, not just published policy documentation.

Real-World Results: What Payer-Specific Scrubbing Actually Delivers

- $1.16M recovered in 6 months for Aqua Derm through automated eligibility and claim validation workflows

- 380,000+ eligibility checks automated, eliminating the IVR calls and manual verification that create pre-claim errors

- 46,000 hours saved in manual processing, time your team can redirect to exception handling and appeals

- 99.8% automation success rate across claim validation workflows

- 6–8 week implementation (vs. the 6–12 months most enterprise RCM vendors quote)

A Practical Starting Point: The 60-Day Payer Intelligence Audit

You don’t need to overhaul your entire scrubbing infrastructure to start capturing payer-specific denial intelligence. Here’s a practical 60-day path to get there:

Days 1–15: Denial Root Cause Mapping

Pull your last 6 months of denial data and categorize by payer, denial reason, and claim type. Look for payer-specific patterns: the same CARC code appearing at an elevated rate from a specific payer is your signal. You’re looking for rate anomalies, not just volume.
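The difference between rate and volume can be sketched as follows, assuming you have one record per denial plus total claim counts per payer for the same period. The input shapes and the `min_ratio` default are hypothetical.

```python
from collections import defaultdict


def carc_rate_anomalies(denials, claim_counts, min_ratio=2.0):
    """Find (payer, CARC) pairs whose rate is well above the all-payer rate.

    denials:      list of (payer, carc) tuples, one per denied claim
    claim_counts: {payer: total claims submitted in the same period}
    """
    by_pair = defaultdict(int)
    by_carc = defaultdict(int)
    for payer, carc in denials:
        by_pair[(payer, carc)] += 1
        by_carc[carc] += 1
    total_claims = sum(claim_counts.values())
    anomalies = []
    for (payer, carc), count in by_pair.items():
        payer_rate = count / claim_counts[payer]
        overall_rate = by_carc[carc] / total_claims
        if payer_rate >= min_ratio * overall_rate:
            anomalies.append((payer, carc, payer_rate, overall_rate))
    return anomalies
```

With 30 denials on 1,000 claims for one payer and 12 denials on 100 claims for another, the first payer wins on volume, but only the second is flagged, because its rate is several times the all-payer rate.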

Days 16–30: Edit Gap Analysis

Compare your current scrubber’s edit library against the denial patterns you’ve identified. For each pattern that your scrubber should have caught but didn’t, you’ve found an edit gap. Document these with specificity: payer, CPT/ICD combination, frequency, average dollar value.
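One possible shape for that documentation is a small record per gap that can be sorted by dollar impact. The class and field names below are hypothetical, and the sample values are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class EditGap:
    payer: str
    cpt: str
    icd10: str
    denials_in_period: int    # frequency over the audit window
    avg_denied_amount: float  # average dollar value per denial

    @property
    def dollars_at_risk(self) -> float:
        """Total denied dollars attributable to this gap in the audit window."""
        return self.denials_in_period * self.avg_denied_amount


def prioritize(gaps):
    """Order edit gaps so the largest dollar impact comes first."""
    return sorted(gaps, key=lambda g: g.dollars_at_risk, reverse=True)


if __name__ == "__main__":
    gaps = [
        EditGap("Example Payer B", "97110", "M25.561", 140, 95.0),
        EditGap("Example Payer A", "27447", "M17.11", 12, 18000.0),
    ]
    for gap in prioritize(gaps):
        print(gap.payer, gap.cpt, gap.icd10, f"${gap.dollars_at_risk:,.0f}")
```

Sorting by dollars rather than denial count keeps a low-frequency, high-value procedure from being buried under a high-frequency, low-value one.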

Days 31–60: Rules Engine Validation

For your top five payers (by denial volume or dollar impact), audit whether your current system has payer-specific logic, or whether it’s applying industry-standard rules uniformly. If it’s the latter, you know the size of the gap. That number is your business case for upgrading.

The Bottom Line on Payer-Specific Claim Scrubbing

Generic claim scrubbers aren’t bad tools. They’re just built for a world where all payers play by the same rules, and that world doesn’t exist. Your clean claim rate reflects the gap between what your scrubber validates and what your payers actually want.

The revenue cycle leaders who are consistently running 95%+ clean claim rates aren’t doing it with bigger billing teams. They’re doing it with payer-specific claim scrubbing logic that reflects real-world denial behavior, built on a continuously updated payer intelligence database, not a static edit library.

The good news: this isn’t a two-year infrastructure project. It starts with understanding where your current scrubber’s blind spots are, and those blind spots are sitting right there in your denial data, waiting to be found.

If your denial data is telling you that your current scrubber isn’t doing the job, or you want to find out, contact us for a 15-minute RCM assessment.