Executive Summary

You’ve got a denial queue with 4,000 claims in it. Your AR team is working it down, appealing what they can, writing off what they can’t, and somewhere in the back of your mind you know that about 60% of those denials were avoidable. You knew it when the claims went out. You just didn’t have a system to stop them.

That’s the denial prevention problem in one paragraph. Not the appeals process. Not the write-off policy. The fact that denial prevention strategies, real ones, applied upstream, remain an afterthought in most revenue cycle operations while teams burn hours downstream on work that should never have existed.

TL;DR — Key Takeaways

- Denial management is the wrong frame. By the time a claim is denied, you’ve already lost. Prevention is where the money lives.

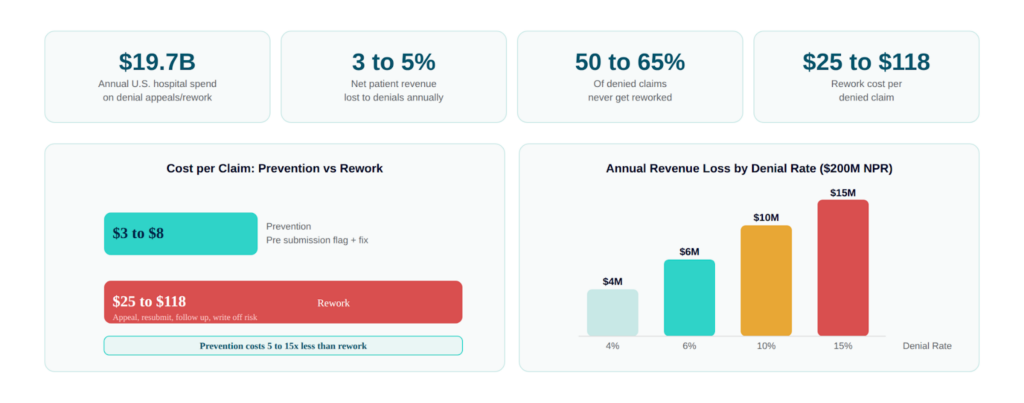

- The average U.S. hospital writes off 3–5% of net patient revenue to denials annually. Most of it is preventable.

- Reactive denial workflows (work the queue, appeal, resubmit) cost $25–$118 per claim to rework. Prevention costs a fraction of that.

- Predictive denial analytics lets you identify which claims are likely to be denied before they’re submitted, and fix them upstream.

- AI denial management doesn’t mean “magic black box.” It means systematic pattern recognition applied to your payer data, your claim history, and your specific denial trends.

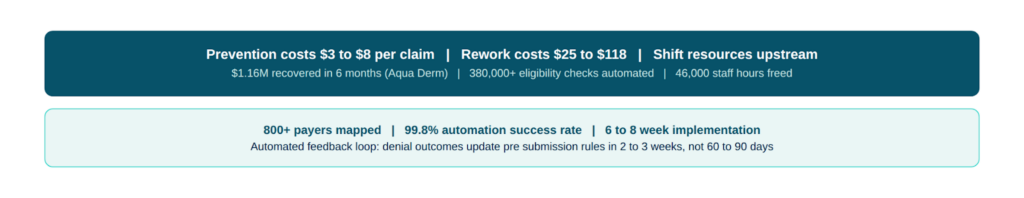

- Aqua Derm recovered $1.16M in 6 months after implementing automated front-end RCM processes, including denial prevention workflows.

If your denial rate is above 5%, and for many mid-market health systems it’s sitting between 8% and 15%, you’re not facing a denial management problem. You’re facing a denial prevention problem that’s being handled as a denial management problem. That distinction is expensive.

Still handling denials through manual billing? Discover how Innobot’s automated medical billing software streamlines the entire billing cycle.

The Real Cost of Denials (Beyond the Write-Off Line)

Let’s start with numbers that should be in your next CFO conversation.

The American Hospital Association estimates U.S. hospitals spend $19.7 billion annually just on the administrative work of appealing and resubmitting denied claims. That’s not the write-off cost; it’s just the labor cost of fighting back. Add in actual denial write-offs, and the average health system is absorbing 3–5% of net patient revenue in denial-related losses every year.

For a $200M net patient revenue organization, that’s $6–10M annually. For a $500M system, you’re potentially looking at $15–25M.

Here’s the number that should sting more: MGMA data consistently shows that 50–65% of denied claims are never reworked. They just get written off. Not because your team doesn’t care, but because the cost of reworking a $200 claim through three appeal levels doesn’t justify the labor investment. So the write-off happens quietly, quarter after quarter.

And the cost-per-denial math is brutal. Industry benchmarks put denial rework cost at $25–$118 per claim depending on complexity and payer. If you’re processing 10,000 claims per month with a 10% denial rate, that’s 1,000 denials per month, and somewhere between $25,000 and $118,000 in rework cost alone, before you factor in the revenue you don’t recover.

Now flip the math. Prevention-focused workflows that catch a denial risk before submission cost $3–8 per claim to implement. The ROI calculus isn’t complicated.
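To make that comparison concrete, here is a minimal Python sketch of the arithmetic above. The helper names `cost_range` and `percent_of` are illustrative, not part of any product. The prevention line rests on one loud assumption: that the $3–8 cost is incurred only on the at-risk claims. If your prevention cost applies to every claim screened, swap in your total claim count instead.

```python
def cost_range(units, low_per_unit, high_per_unit):
    """Scale a per-unit dollar cost range by a unit count."""
    return units * low_per_unit, units * high_per_unit


def percent_of(amount, percent):
    """Whole-number percent of an amount (integer math avoids float rounding)."""
    return amount * percent // 100


# Figures quoted in the text above.
claims_per_month = 10_000
denial_rate_pct = 10
denials = percent_of(claims_per_month, denial_rate_pct)

# Rework at $25-$118 per denied claim.
rework = cost_range(denials, 25, 118)

# ASSUMPTION: the $3-8 prevention cost applies only to the at-risk claims.
prevention = cost_range(denials, 3, 8)

# 3-5% of net patient revenue for a $200M organization.
writeoff_200m = (percent_of(200_000_000, 3), percent_of(200_000_000, 5))

print(f"Denials per month: {denials:,}")
print(f"Monthly rework cost: ${rework[0]:,} to ${rework[1]:,}")
print(f"Monthly prevention cost (assumed scope): ${prevention[0]:,} to ${prevention[1]:,}")
print(f"Annual denial-related loss at $200M NPR: ${writeoff_200m[0]:,} to ${writeoff_200m[1]:,}")
```

Plugging in your own claim volume, denial rate, and per-claim costs gives a first-pass number for the CFO conversation.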

Why Denial Rates Keep Climbing Despite Better Technology

Here’s something counterintuitive: denial rates have been trending upward for most health systems even as EHR technology has improved and most organizations have moved to electronic claim submission. The reason is payer behavior, not provider behavior.

Payers have systematically expanded their denial criteria over the past decade. Prior authorization requirements have increased 293% since 2015 according to AMA data. Clinical documentation requirements have grown more complex. Payers have added new edit layers (medical necessity reviews, clinical validation denials, bundling edits) that didn’t exist five years ago.

Aetna, United, and Cigna have all expanded their automated prepayment review programs. What used to sail through adjudication now hits a clinical edit. And the edit criteria change, sometimes with notice and sometimes without, which means a claim that was clean last quarter gets denied this quarter on a criterion your billing team didn’t know about.

This is the environment where static denial management workflows fail. If you’re working from a denial prevention checklist that’s 18 months old, you’re fighting this year’s denial patterns with last year’s rules.

What Most Teams Try (And Why It’s Not Enough)

Before we get to what actually moves the needle, let me walk through the approaches I see most often, because if you’ve tried these and you’re still reading, you already know their limitations.

Denial Tracking Dashboards

Every major RCM system now has a denial dashboard. You can see denial rates by payer, by denial code, by facility, by provider. The problem is that a dashboard is a rearview mirror. It tells you what denied last month. It doesn’t tell you which claims in your current work queue are at elevated risk of denial before you submit them.

Dashboards are useful for identifying systemic patterns (“our CO-4 denials from Medicaid are up 30% this quarter”), but most organizations don’t have the analytical bandwidth to translate that pattern into a prevention workflow before the next batch of claims goes out. The insight sits in the dashboard while the claims keep denying.

Denial Committees

The monthly denial committee meeting is a fixture in mid-market health system RCM. Representatives from coding, billing, clinical documentation, and case management sit around a table, review the top denial categories, assign action items, and reconvene in 30 days.

I’ve run these meetings. I’ve sat in these meetings. The action items from November are still in someone’s inbox in February. Not because people are lazy, but because the workflow change needed to implement a denial prevention rule requires IT involvement, EHR configuration, training, and process documentation. That doesn’t happen in 30 days with a distributed team that has a day job.

Retrospective Payer Contract Analysis

Some organizations do periodic payer contract audits to identify where their denial rates are highest relative to contract terms. This is useful but slow, and by the time you’ve identified a pattern, negotiated a fix, and implemented a workflow change, you’ve absorbed 6–12 months of preventable denials.

The fundamental problem with all of these approaches is that they’re retrospective. They analyze what happened. Denial prevention strategies that actually reduce denial rates require prospective identification: catching the problem before the claim leaves your system.

A Better Framework: Predictive Denial Analytics and Where AI Actually Helps

Let me be specific about what I mean when I say AI denial management, because “AI” in healthcare IT has been so thoroughly abused as a marketing term that it’s reasonable to be skeptical.

I’m not talking about a machine learning model that claims to predict denials with 95% accuracy based on a dataset that doesn’t include your payers, your contracts, or your specific claim patterns. I’ve seen that pitch. I’ve been in those demos. The model looks impressive until you ask which version of the Cigna LocalPlus network it was trained on.

What I’m talking about is something more specific and more useful: systematic, automated pattern recognition applied to your historical denial data, your current claim queue, and real-time payer edit intelligence, with the output being a flag on a specific claim in your work queue that says “this claim has characteristics consistent with a CO-97 denial from United, here’s why, and here’s what to fix before you submit.”

That’s not magic. That’s pattern matching at scale. And it works because denial patterns, even when payers change their rules, tend to follow recognizable signatures.

What Predictive Denial Analytics Actually Looks At

Here’s the operational layer, because RCM leaders deserve specifics:

Claim-level risk factors: Procedure code and diagnosis code combinations that have been denied historically at rates above threshold. This is the most straightforward layer: if Blue Cross has denied 40% of your CPT 27447 (total knee arthroplasty) claims over the past 12 months, every knee replacement claim going to Blue Cross should get a pre-submission flag.

Payer-specific edit patterns: Different payers apply different edits, and those edits aren’t always documented in their provider manuals. A good denial prevention system maintains a payer edit library that captures observed denial patterns, not just published coverage policies, and matches incoming claims against that library before submission.

Documentation gap identification: A meaningful percentage of clinical validation denials and medical necessity denials trace back to documentation that was present in the clinical record but not captured on the claim. Automated denial prevention can flag claims where the documented diagnosis supports the procedure but the coding doesn’t reflect it, before the claim goes out.

Authorization status verification: The authorization adjacency problem is real. A claim that looks clean from a coding standpoint will deny if the auth wasn’t obtained, or if the auth obtained was for a different level of service than what was billed. Pre-submission auth verification against your claim detail catches this before it becomes a CO-197 denial.

Timely filing risk: This one seems obvious but it’s a consistent revenue leak in high-volume environments. Claims that have been sitting in a work queue for 45+ days on a payer with a 90-day timely filing limit need to be prioritized and flagged, not discovered during a denial review three weeks after they went out past the window.
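Three of the checks above (historical denial rate, authorization mismatch, and timely filing risk) can be sketched as one pre-submission screen. This is a simplified Python illustration, not a description of any vendor’s implementation. The `Claim` fields, the 25% rate threshold, the 45-day buffer, and the `filing_limits` table are all hypothetical placeholders you would replace with your own data.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Claim:
    payer: str
    cpt: str
    service_date: date
    billed_level: str                # e.g. "inpatient"
    authorized_level: Optional[str]  # None means no auth on file
    auth_required: bool = True       # assumption: caller knows if auth applies


def denial_rates(history):
    """history: iterable of (payer, cpt, was_denied) -> {(payer, cpt): rate}."""
    totals, denied = defaultdict(int), defaultdict(int)
    for payer, cpt, was_denied in history:
        totals[(payer, cpt)] += 1
        denied[(payer, cpt)] += int(was_denied)
    return {key: denied[key] / totals[key] for key in totals}


def screen(claim, rates, filing_limits, today,
           rate_threshold=0.25, filing_buffer_days=45):
    """Return a list of actionable flags for one claim (empty if clean)."""
    flags = []
    rate = rates.get((claim.payer, claim.cpt), 0.0)
    if rate >= rate_threshold:
        flags.append(f"historical denial rate {rate:.0%} for {claim.payer}/{claim.cpt}")
    if claim.auth_required:
        if claim.authorized_level is None:
            flags.append("no authorization on file")
        elif claim.authorized_level != claim.billed_level:
            flags.append(f"auth mismatch: authorized {claim.authorized_level}, "
                         f"billed {claim.billed_level}")
    limit = filing_limits.get(claim.payer)  # filing limit in days, if known
    if limit is not None:
        days_left = limit - (today - claim.service_date).days
        if days_left <= filing_buffer_days:
            flags.append(f"timely filing risk: {days_left} days left")
    return flags


# Illustrative data only: 4 of 10 historical knee replacement claims denied.
history = [("Blue Cross", "27447", True)] * 4 + [("Blue Cross", "27447", False)] * 6
rates = denial_rates(history)
filing_limits = {"Blue Cross": 90}
today = date(2024, 2, 20)

risky = Claim("Blue Cross", "27447", date(2024, 1, 2), "inpatient", "outpatient")
clean = Claim("United", "99213", date(2024, 2, 15), "outpatient", "outpatient")

risky_flags = screen(risky, rates, filing_limits, today)
clean_flags = screen(clean, rates, filing_limits, today)
for flag in risky_flags:
    print(flag)
```

Each flag carries a reason rather than just a score, which is what makes it actionable for the billing specialist who has to fix the claim.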

Most denial workflows react after claims fail. See how Innobot’s automated denial management software helps prevent denials before claims are submitted.

Building the Feedback Loop: Where “Learns From Corrections” Actually Means Something

Here’s the piece that most denial prevention systems miss and that makes a meaningful difference over time: the feedback loop between denial outcomes and pre-submission rules.

When a claim is denied, even after your prevention layer reviewed it, that denial outcome needs to feed back into your rule set. If United starts denying a CPT code combination that sailed through last quarter, your system should detect that pattern within a few weeks of denials hitting the queue and automatically elevate the risk score for similar claims going forward.

This isn’t sophisticated artificial intelligence. It’s a correction loop: the same logic your best denial analyst applies manually when she notices a new denial pattern, only automated and operating at the speed of your claim volume rather than the speed of a monthly denial committee meeting.

The difference in practice: a manual correction loop might catch a new denial pattern in 60–90 days. An automated feedback system catches it in 2–3 weeks. On a 90-day timely filing window, a gap of six weeks or more can mean the difference between a recoverable denial and a write-off.
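One simple way to picture that correction loop is a rolling window of recent outcomes per payer and code combination, so the risk score moves as soon as new denials arrive. The Python sketch below is an assumption-laden illustration: the `DenialFeedback` class, the window size, and the minimum sample count are hypothetical choices, not a description of how any particular system works.

```python
from collections import defaultdict, deque


class DenialFeedback:
    """Keeps the most recent outcomes per (payer, code) and scores risk."""

    def __init__(self, window=50, min_samples=10):
        self.min_samples = min_samples
        # Each deque silently drops its oldest outcome once it is full.
        self.outcomes = defaultdict(lambda: deque(maxlen=window))

    def record(self, payer, code, was_denied):
        """Feed one adjudicated claim outcome back into the rule set."""
        self.outcomes[(payer, code)].append(bool(was_denied))

    def risk(self, payer, code):
        """Recent denial rate, or None when there is too little data."""
        recent = self.outcomes.get((payer, code))
        if not recent or len(recent) < self.min_samples:
            return None
        return sum(recent) / len(recent)


# Illustrative scenario: a code combination that used to pay starts denying.
feedback = DenialFeedback(window=20, min_samples=10)
for _ in range(20):
    feedback.record("United", "99214+96372", False)
risk_before = feedback.risk("United", "99214+96372")

for _ in range(10):
    feedback.record("United", "99214+96372", True)
risk_after = feedback.risk("United", "99214+96372")

print(f"Risk before the payer change: {risk_before:.0%}")
print(f"Risk after ten new denials: {risk_after:.0%}")
```

A short window reacts faster to payer rule changes but is noisier on low-volume codes, so the window and minimum sample count are worth tuning per payer.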

Revenue Loss Prevention: The Downstream Impact Nobody Calculates

Most organizations measure denial prevention success by denial rate. That’s the right metric, but it understates the full financial impact of a functioning prevention program. Here’s what else changes when denial prevention works:

Days in AR compresses. Clean claims pay faster. When your pre-submission review catches errors before submission, your first-pass resolution rate improves, and first-pass resolution is the single biggest driver of days in AR. Organizations that move their clean claim rate from 85% to 95% typically see 5–12 day reductions in overall AR days.

Rework labor cost drops. Every denial that doesn’t happen is a rework event that doesn’t consume billing staff capacity. In a department running 10–20% denial rates, a meaningful portion of your billing staff’s day is denial rework rather than clean claim processing. When that ratio shifts, you either free up capacity for higher-value work or reduce overtime costs. Both are real numbers.

Write-off reduction compounds over time. This is the one that shows up most clearly in year-over-year financial analysis. Write-off reduction from denial prevention isn’t a one-time recovery event. It’s a structural change in your revenue capture rate that compounds. A $3M annual write-off reduction doesn’t just save $3M this year; it changes your baseline for every subsequent year.

Appeal and administrative cost decreases. This connects back to the $19.7B industry-wide figure. When you’re preventing more denials, you’re also reducing the volume of claims that enter the appeals workflow, which reduces the administrative cost burden on your billing team and your clinical documentation staff who are frequently pulled into appeals support.

Learn how denial management services help prevent claim denials, recover lost revenue, and stop payers from keeping the money you’ve earned.

What to Look For When Evaluating Denial Prevention Solutions

If you’re evaluating AI denial management solutions, or any denial prevention technology, here are the questions that separate vendors who understand your operational reality from vendors who understand how to run a demo.

On the Data Foundation

- What historical denial data does your system train on, and is it specific to my payer mix and service lines, or is it generic industry data?

- How quickly does your rule set update when payers change their denial criteria? Ask for a specific example of a payer edit change and how their system responded.

- Can you show me the denial pattern library for the top 5 payers in my current mix? Ask for specifics, which CPT codes, which diagnosis code combinations, which edit types.

On Pre-Submission Workflow Integration

- Does the denial prevention flag appear in my billing team’s actual workflow, in the EHR or billing system, or does it require them to work in a separate system?

- What does a flagged claim look like to the billing specialist? Is the flag actionable (here’s what to fix) or just informational (this claim is at risk)?

- How does the system handle payers that don’t publish their edit criteria? This is where real operational knowledge separates from vendor positioning.

On Measurable Outcomes

- What denial rate improvement should I realistically expect, and over what timeline? “Up to 30% reduction” is not an answer. Ask for median outcomes from similar organizations.

- Can you project ROI based on my actual denial data? Any vendor worth evaluating should be able to run your denial history through their model and show you specific recoverable revenue, before you sign a contract.

- What does your 6-month outcome look like for an organization at my volume and denial rate?

On Implementation Reality

This is where deals look great in procurement and fall apart in deployment. Ask:

- What does week-by-week implementation look like?

- What EHR and billing system integrations do you support natively versus through workarounds?

- What’s your average time from contract to go-live? (Industry norm is 6–12 months. The right answer is 6–8 weeks, and ask for references.)

The Aqua Derm Benchmark: What “Working” Actually Looks Like

Aqua Derm came to us with a familiar problem: multi-location practice, mixed payer environment, front desk staff stretched thin, and a denial pattern that had been quietly absorbing revenue for years without a systematic fix.

Six months after implementation:

- $1.16M in revenue recovered — not projected, recovered

- 380,000+ eligibility checks automated — removing the manual verification burden that was feeding the denial pipeline

- 46,000 staff hours reclaimed — redirected to higher-value work

- Implementation completed in 6–8 weeks, not the 9-month integration project they’d been quoted elsewhere

The $1.16M recovery wasn’t magic. It was what happens when eligibility gaps, pre-submission denial risk, and automated claim review work together instead of as disconnected manual processes. Revenue loss prevention at the front end of the revenue cycle compounds into real numbers faster than most organizations expect.

The Bottom Line: Denial Prevention Is a Strategy, Not a Dashboard

If you take one thing from this article, make it this: denial prevention strategies are not a reporting exercise. A dashboard that shows you what denied last month is useful for identifying patterns. It is not a prevention program.

A real denial prevention program operates before the claim leaves your system. It knows which payer/procedure combinations are currently denied at elevated rates. It flags documentation gaps before they become CO-50 medical necessity denials. It catches auth mismatches before they become CO-197s. It identifies timely filing risk before the window closes. And it gets smarter over time as denial outcomes feed back into the rule set.

The organizations that are moving from 10–12% denial rates to 4–6% denial rates aren’t doing it with better denial committees or more aggressive appeals. They’re doing it by shifting resources upstream, building pre-submission review into the claim workflow rather than treating denial rework as an inevitable cost of doing business.

You already know this. The question is whether you have the system in place to act on it.

Contact us to learn how automated denial prevention can reduce denials and recover lost revenue.